I Built a System That Creates YouTube Videos From Scratch part 3. What If the AI Didn't Just Write the Script — But the Code Behind Every Scene?

by Iliya Oblakov · 17 min read

That menu was the new ceiling.

Here's what I built next. And honestly? This one is the weirdest thing I've shipped.

**[Watch the 3rd live iteration in action](https://youtu.be/lOfUFrNG_W4)**

---

## The Problem With "Generated From the Script"

In v2, every visual came from the script. Sounds great. But "from the script" was a polite way of saying "the script picks one of twelve scene types, fills in some props, and the renderer plays a pre-built React component." If the script said `[CODE_CARD]` you got the code card. If it said `[TERMINAL]` you got the terminal. If it said `[KINETIC_TEXT]` you got kinetic text.

That's not generation. That's selection.

And the moment you watch a 15-minute video, that limitation shows up as repetition. Every code moment looks like every other code moment. Every diagram has the same scaffolding. The pacing is decent because the script controls timing, but visually you're cycling through the same twelve cards over and over.

Stock footage felt generic for one reason. Hand-coded scene types felt generic for the exact same reason — both are fixed inventory.

The fix that kept nagging at me was obvious and uncomfortable: **stop building scene types. Let an AI write each scene's React component live, every time, fresh.**

So that's what I did.

---

## What's Actually Happening Now

Every time I render a video, the pipeline does something that still feels strange to type out.

For each scene in the script, it:

1. Builds a structured **directive** — a JSON blueprint describing the scene (mood, narration, highlight words, design tokens, layout contract, allowed APIs, props schema).

2. Sends that directive to **Gemini 3.1 Pro Preview** with a hard prompt: write a single TSX file that implements this scene as a Remotion React component.

3. Runs the generated TSX through a **5-stage validation gauntlet** — imports, forbidden patterns, audio frame-offset bug, layout contract, full TypeScript compile.

4. If anything fails, the failure messages are shoved back into the next prompt with "fix these errors and re-emit the file." Up to N retries.

5. The validated component lands at `Scene_<id>.tsx` in a per-video output directory.

6. A registry file tells Remotion which generated component to mount for which scene at runtime.

The renderer then bundles everything and plays back a video where **every scene is a React component the model wrote that day, for that script, with that narration, scoped to that mood.**

There are no scene types anymore. There's just a directive and a model.

---

## The Directive Is Doing Most of the Work

I want to make a point I didn't fully appreciate until I shipped this: **the model is not the clever part. The directive is.**

A directive is a tight contract. Excerpts of what gets passed in:

- `scene_id`, `duration_frames`, `fps` — when this thing has to start, end, and at what frame rate.

- `narration` and `highlight_words` — what the narrator is saying, and which phrases need to pop on screen.

- `mood` — `urgent`, `serious`, `curious`, `excited`, `reflective`, `neutral`. Drives the visual energy.

- `design_system` — explicit colors, fonts, accent values. Not "look modern." Specific hex codes.

- `props_schema` — the literal TypeScript type of `props.scene.X` the component is allowed to read. If the field isn't in the schema, the component can't access it.

- `visual_contract` — the layout mode (`hero`, `split`, `stack`, `radial`, etc.) and background treatment (`grid`, `gradient`, `noise`). Locked. The validator enforces it.

- `constraints` — a list of about 30 hard rules.

That last one is where the system actually lives. Every constraint in that list exists because something failed in the past. A few examples:

- "**Never use `Math.random()`, `Date.now()`, or `performance.now()`.** Components must be deterministic — Remotion re-renders every frame, so non-deterministic calls cause elements to jump 30 times per second. If you need pseudo-randomness, import `random` from `remotion` and seed it with `props.scene.id`."

- "**Never call `useVideoConfig().durationInFrames`.** That returns the WHOLE VIDEO's length, not this scene's. Use `props.scene.durationFrames` directly. Inside a `<Sequence>`, useVideoConfig still returns the parent composition's metadata, so this trap silently produces motion that completes in 1% of the visible time."

- "**Never hardcode absolute frame numbers** in `interpolate()` ranges. The pipeline runs at 30fps today but may upgrade to 60fps; absolute-frame math would silently double animation speed."

- "**You may only access scene fields listed in `props_schema.scene_fields`.**" If the model invents a field, the component crashes at runtime — so don't invent.

- "**Treat narration as design context, not display copy.**" Don't blit the whole sentence onto the screen. Derive a 2–5 word title or label from it. (The number of times a model will happily render a wall of text otherwise is wild.)
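Two of the timing rules above come down to plain arithmetic. The following is a minimal sketch, not the pipeline's actual code: the helper names (`secondsToFrames`, `sceneProgress`, `fadeInOpacity`) are hypothetical. The idea is to state durations in seconds and convert them with `fps`, and to measure progress against the scene's own length rather than the whole video's.

```typescript
// Convert a duration in seconds to frames, so timing survives an fps change.
function secondsToFrames(seconds: number, fps: number): number {
  return Math.round(seconds * fps);
}

// Clamp a value into the range [0, 1].
function clamp01(value: number): number {
  return Math.min(1, Math.max(0, value));
}

// Progress through THIS scene (0 at its first frame, 1 at its last),
// computed from the scene-local frame and the scene's own duration.
function sceneProgress(localFrame: number, sceneDurationFrames: number): number {
  if (sceneDurationFrames <= 1) return 1;
  return clamp01(localFrame / (sceneDurationFrames - 1));
}

// Linear fade-in that always lasts `fadeSeconds`, whatever the frame rate.
function fadeInOpacity(localFrame: number, fps: number, fadeSeconds: number): number {
  const fadeFrames = Math.max(1, secondsToFrames(fadeSeconds, fps));
  return clamp01(localFrame / fadeFrames);
}
```

In a real component, `localFrame` would come from `useCurrentFrame()` and the scene length from `props.scene.durationFrames`, as the constraints require. A half-second fade is 15 frames at 30fps and 30 frames at 60fps, so the animation keeps the same wall-clock speed either way.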

Every one of those rules is a scar. They are also what makes the model's output usable. Without that contract, you get a confident pile of TSX that looks plausible and renders into garbage.
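To make the shape of that contract concrete, here is a rough TypeScript sketch of a directive. Only the top-level field names, `props_schema.scene_fields`, and `visual_contract.layout_mode` come from this article. The other nested keys, every example value, and the `isSceneFieldAllowed` helper are assumptions for illustration.

```typescript
type Mood = "urgent" | "serious" | "curious" | "excited" | "reflective" | "neutral";

interface SceneDirective {
  scene_id: string;
  duration_frames: number;
  fps: number;
  narration: string;
  highlight_words: string[];
  mood: Mood;
  // Nested keys below are illustrative guesses, except `scene_fields`
  // and `layout_mode`, which the article names explicitly.
  design_system: { colors: Record<string, string>; fonts: string[] };
  props_schema: { scene_fields: string[] };
  visual_contract: { layout_mode: string; background: string };
  constraints: string[];
}

// Made-up example values, only to show the shape.
const exampleDirective: SceneDirective = {
  scene_id: "007",
  duration_frames: 180,
  fps: 30,
  narration: "The cache key changes whenever the prompt template changes.",
  highlight_words: ["cache key"],
  mood: "curious",
  design_system: {
    colors: { accent: "#38bdf8", background: "#0b1020" },
    fonts: ["Inter"],
  },
  props_schema: { scene_fields: ["id", "durationFrames", "startFrame"] },
  visual_contract: { layout_mode: "split", background: "grid" },
  constraints: ["Never use Math.random(), Date.now(), or performance.now()."],
};

// True if a generated component may read `props.scene.<field>`.
function isSceneFieldAllowed(directive: SceneDirective, field: string): boolean {
  return directive.props_schema.scene_fields.indexOf(field) !== -1;
}
```

With a type like this, an invented field is something a validator can reject by name before the component ever reaches the renderer.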

---

## The Validation Gauntlet

The model writes TSX. Then five independent checks run before that file is allowed near the renderer.

**Check 1 — Imports.** Only allowed packages and local module paths can be imported. `remotion`, `react`, `@remotion/media-utils`, `lucide-react`, plus a handful of internal helpers. Anything else gets rejected.
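A minimal sketch of such an import check, assuming a regex scan rather than a real parser. The package allowlist mirrors the names above. Treating every relative path as an allowed "local module path" and the name `findDisallowedImports` are assumptions; the real check presumably restricts local paths further.

```typescript
const ALLOWED_PACKAGES = ["remotion", "react", "@remotion/media-utils", "lucide-react"];

// Assumption: any relative path counts as an allowed local module.
function isAllowedImport(specifier: string): boolean {
  const isLocal = specifier.indexOf("./") === 0 || specifier.indexOf("../") === 0;
  return isLocal || ALLOWED_PACKAGES.indexOf(specifier) !== -1;
}

// Return every imported module specifier that is not on the allowlist.
function findDisallowedImports(source: string): string[] {
  // Matches `import X from "mod"`, `import { a } from 'mod'`, and `import "mod"`.
  const importPattern = /\bimport\s+(?:[^'"]*?\s+from\s+)?["']([^"']+)["']/g;
  const rejected: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = importPattern.exec(source)) !== null) {
    const specifier = match[1] ?? "";
    if (!isAllowedImport(specifier)) rejected.push(specifier);
  }
  return rejected;
}
```

A regex like this does not see dynamic `import()` calls or `require`, so a stricter version would walk the TypeScript AST instead.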

**Check 2 — Forbidden patterns.** A regex bank that catches the most common failure modes: `className=`, `Math.random(`, `Date.now(`, `transition:`, `animation:`, `@keyframes`, `@font-face`, `<link rel="stylesheet"`, raw `<Img src={props.X.url}>` without `staticFile()`, `useVideoConfig().durationInFrames`, and a few others. Each pattern has a paired error message that explains the actual failure mode in plain English — that message is what gets fed back to the model on retry.
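The "pattern plus paired message" idea can be sketched in a few lines. This is an illustration under assumptions, not the actual regex bank: it covers only three of the patterns listed above, and the message wording is paraphrased from the constraints earlier in this article.

```typescript
interface ForbiddenPattern {
  pattern: RegExp;
  message: string; // fed back to the model on retry
}

const FORBIDDEN_PATTERNS: ForbiddenPattern[] = [
  {
    pattern: /Math\.random\s*\(/,
    message:
      "Math.random() is non-deterministic and makes elements jump between frames. " +
      "Import random from remotion and seed it with props.scene.id.",
  },
  {
    pattern: /Date\.now\s*\(/,
    message: "Date.now() is non-deterministic. Derive all motion from the current frame.",
  },
  {
    pattern: /useVideoConfig\(\)\.durationInFrames/,
    message:
      "useVideoConfig().durationInFrames is the whole video's length. " +
      "Use props.scene.durationFrames for this scene.",
  },
];

// Return the explanation for every forbidden pattern found in the source.
function checkForbiddenPatterns(source: string): string[] {
  return FORBIDDEN_PATTERNS
    .filter(({ pattern }) => pattern.test(source))
    .map(({ message }) => message);
}
```

The patterns deliberately carry no `g` flag, so `test()` keeps no state between calls and the bank can be reused across scenes.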

**Check 3 — Audio frame offset.** If the component samples narration audio for reactive UI, it has to call `getAudioPulse(audioData, scene.startFrame + frame, fps)`, not bare `frame`. Why? Because `useCurrentFrame()` inside a Remotion `<Sequence>` returns the LOCAL frame (0 within the scene), but the audio data is the GLOBAL track. Without the offset, your audio reactivity samples the start of the video instead of the current scene. This bug is invisible until you watch the render. There's a regex now.
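The offset itself is one addition, and a check for it can be approximated with a small regex. The sketch below is a simplified stand-in, not the pipeline's real regex: `toGlobalFrame` and `findUnoffsetAudioCalls` are hypothetical names, and the check only asks whether the second argument of `getAudioPulse(...)` mentions `startFrame` at all.

```typescript
// Frame index on the global narration track for a scene-local frame.
function toGlobalFrame(sceneStartFrame: number, localFrame: number): number {
  return sceneStartFrame + localFrame;
}

// Flag getAudioPulse(...) calls whose second argument never mentions startFrame.
function findUnoffsetAudioCalls(source: string): string[] {
  const callPattern = /getAudioPulse\(\s*[^,]+,\s*([^,]+),/g;
  const offenders: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = callPattern.exec(source)) !== null) {
    const frameArgument = (match[1] ?? "").trim();
    if (!/startFrame/.test(frameArgument)) offenders.push(frameArgument);
  }
  return offenders;
}
```

For a scene that starts at frame 900 of a 30fps video, local frame 30 is global frame 930, which is second 31 of the narration track. Passing bare `frame` would sample second 1 instead.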

**Check 4 — Layout contract.** The directive's `visual_contract.layout_mode` must literally appear as the `mode` prop on the root `<SceneLayout>`. If the contract says `split` and the component roots with `mode="hero"`, it's rejected. This stops the model from deciding to do its own thing.
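As an illustration, a contract check of this kind might look like the sketch below. It assumes the first `<SceneLayout>` in the file is the root and that `mode` is written as a string literal; the function names and error wording are hypothetical.

```typescript
// Read the literal `mode` prop from the first <SceneLayout ...> tag, if any.
function findRootLayoutMode(source: string): string | null {
  const pattern = /<SceneLayout\b[^>]*?\bmode\s*=\s*(?:"([^"]+)"|\{\s*["']([^"']+)["']\s*\})/;
  const match = pattern.exec(source);
  if (match === null) return null;
  return match[1] ?? match[2] ?? null;
}

// Return an error message for the retry prompt, or null if the contract holds.
function checkLayoutContract(source: string, expectedMode: string): string | null {
  const actualMode = findRootLayoutMode(source);
  if (actualMode === null) {
    return `No <SceneLayout> with a literal mode prop found; expected mode="${expectedMode}".`;
  }
  if (actualMode !== expectedMode) {
    return `Layout contract requires mode="${expectedMode}" but the component uses mode="${actualMode}".`;
  }
  return null;
}
```

Requiring a literal is part of the point: a computed `mode={someVariable}` fails this check, which keeps the layout decision in the directive and out of the generated code.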

**Check 5 — TypeScript compile.** A synthetic `tsconfig` is written that extends the project's main config and includes only the new file. `npx tsc --noEmit --project ...` runs against it. If anything fails — type mismatch, undefined symbol, broken import — the first 5 errors get summarized with surrounding lines and fed back to the next prompt.
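A rough sketch of the two pieces around the compiler call, under stated assumptions: `buildSyntheticTsconfig` and `firstTscErrors` are hypothetical names, and this version keeps only the error lines rather than adding the surrounding source lines mentioned above. `extends` and `include` are standard `tsconfig.json` fields, and an `include` in the extending config replaces the one from the base config.

```typescript
interface SyntheticTsconfig {
  extends: string;
  include: string[];
  compilerOptions: { noEmit: boolean };
}

// Build a config that inherits the project's settings but checks one file.
function buildSyntheticTsconfig(baseConfigPath: string, componentPath: string): SyntheticTsconfig {
  return {
    extends: baseConfigPath,
    include: [componentPath],
    compilerOptions: { noEmit: true },
  };
}

// Keep the first `limit` diagnostics from plain (non-pretty) tsc output,
// where each error line looks like: file.tsx(12,5): error TS2304: message
function firstTscErrors(tscOutput: string, limit: number = 5): string[] {
  return tscOutput
    .split(/\r?\n/)
    .filter((line) => /\berror TS\d+:/.test(line))
    .slice(0, limit);
}
```

The config object would be written to disk with `JSON.stringify` before `npx tsc --noEmit --project` points at it. Capping the feedback at five errors keeps the retry prompt short even when one bad import cascades into dozens of diagnostics.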

Pass all five and the file gets written into `temp/videos/<video_id>/generated_components/Scene_<id>.tsx`. Fail and you retry. Fail the configured number of retries and the pipeline either fails the build or falls back, depending on `component_codegen_strict`.

That's the real loop. Generate → validate → feed errors back → retry. It's a tiny agent harness with a verifiable output and zero shared state between scenes.
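The loop is small enough to sketch in full. This is an illustration, not the pipeline's code: all names are hypothetical, and the model call is a synchronous stand-in (a real one would be asynchronous) so the example stays short and testable.

```typescript
// A validator returns human-readable error messages; an empty list means "pass".
type Validator = (source: string) => string[];

// Stand-in for the model call. It receives the directive plus the errors
// from the previous attempt (empty on the first attempt).
type Generate = (directive: string, previousErrors: string[]) => string;

interface CodegenResult {
  ok: boolean;
  source: string | null;
  attempts: number;
  errors: string[];
}

function generateWithRetries(
  directive: string,
  generate: Generate,
  validators: Validator[],
  maxRetries: number,
): CodegenResult {
  let errors: string[] = [];
  const maxAttempts = maxRetries + 1; // one initial attempt plus the retries
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const source = generate(directive, errors);
    errors = [];
    for (const validate of validators) {
      errors = errors.concat(validate(source));
    }
    if (errors.length === 0) {
      return { ok: true, source, attempts: attempt, errors: [] };
    }
  }
  // Out of attempts: the caller decides whether to fail the build or fall back.
  return { ok: false, source: null, attempts: maxAttempts, errors };
}
```

Nothing outside the function is read or written, which matches the "zero shared state between scenes" property: each scene's loop can run independently of the others.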

---

## Caching, Because This Would Otherwise Be Slow

You don't want to call Gemini 3.1 Pro Preview 70 times per video on every render. So there's a fingerprint.

The fingerprint is a SHA-1 of:

- The directive (everything the model sees).

- The schema version.

- The contents of the reference example files.

- The contents of the prompt template.

- The contents of the styling helpers module.

- The provider name and model name.

Anything that could change the model's output is in the hash. If the same scene asks the same model for the same component with the same examples and the same prompt template, the result is reused from disk. Change the prompt template? Cache invalidates for every scene. Change the design system? Cache invalidates. That's the property you want.
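A minimal sketch of such a fingerprint, using Node's built-in `crypto` module. The field names on `FingerprintInputs` and the key-sorted serialization are assumptions; the article only lists what goes into the hash, not how it is serialized.

```typescript
import { createHash } from "node:crypto";

interface FingerprintInputs {
  directive: unknown;
  schemaVersion: string;
  referenceExamples: string[]; // file contents
  promptTemplate: string;      // file contents
  stylingHelpers: string;      // file contents
  provider: string;
  model: string;
}

// Serialize with sorted object keys so equal inputs always produce equal text.
function canonicalJson(value: unknown): string {
  if (value === undefined) return "null";
  if (Array.isArray(value)) {
    return "[" + value.map((item) => canonicalJson(item)).join(",") + "]";
  }
  if (value !== null && typeof value === "object") {
    const record = value as Record<string, unknown>;
    const body = Object.keys(record)
      .sort()
      .map((key) => JSON.stringify(key) + ":" + canonicalJson(record[key]))
      .join(",");
    return "{" + body + "}";
  }
  return JSON.stringify(value);
}

// SHA-1 hex digest over everything that could change the model's output.
function fingerprint(inputs: FingerprintInputs): string {
  return createHash("sha1").update(canonicalJson(inputs)).digest("hex");
}
```

Sorting keys matters because plain `JSON.stringify` follows insertion order, so two directives with the same content but different key order would otherwise miss the cache.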

Cache hits make iteration on the rest of the pipeline tolerable. I'm not paying for re-generation when I'm tweaking subtitles or audio.

---

## And When AI Components Aren't the Right Tool — Codex Images

UI is great for showing data, code, diagrams, kinetic text. UI is bad at being a sky. Or a city skyline. Or a moody portrait of a CPU die under blue rim light.

For scenes where the script wants atmosphere instead of structure, the pipeline takes a different route.

A directive in the script can include `[IMAGE_PROMPT: "tight macro shot of liquid mercury rolling over circuit board, deep navy lighting"]` plus an optional `[IMAGE_STYLE]`, `[IMAGE_ASPECT]`, `[IMAGE_ROLE]` (background or scene), and `[IMAGE_DIM]` (how dimmed it should sit behind narration text). The pipeline picks those up and routes that scene through OpenAI's Codex CLI running **GPT Image 2** instead of through Gemini.
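To illustrate the routing decision, here is a sketch of a tag parser. It assumes every image tag follows the `[KEY: value]` form shown for `IMAGE_PROMPT`, with optional quotes; the article does not show the value syntax for the other tags, so that part is a guess, as are the type and function names.

```typescript
interface ImageDirective {
  prompt: string;
  style: string | null;
  aspect: string | null;
  role: string | null;
  dim: string | null;
}

// Collect IMAGE_* tags from a scene's script text. Returns null when the
// scene has no IMAGE_PROMPT, meaning it is not an image scene.
function parseImageDirective(sceneText: string): ImageDirective | null {
  const tagPattern = /\[(IMAGE_(?:PROMPT|STYLE|ASPECT|ROLE|DIM)):\s*"?([^"\]]+)"?\s*\]/g;
  const values = new Map<string, string>();
  let match: RegExpExecArray | null;
  while ((match = tagPattern.exec(sceneText)) !== null) {
    values.set(match[1] ?? "", (match[2] ?? "").trim());
  }
  const prompt = values.get("IMAGE_PROMPT");
  if (prompt === undefined) return null;
  return {
    prompt,
    style: values.get("IMAGE_STYLE") ?? null,
    aspect: values.get("IMAGE_ASPECT") ?? null,
    role: values.get("IMAGE_ROLE") ?? null,
    dim: values.get("IMAGE_DIM") ?? null,
  };
}

type Route = "codex_image" | "gemini_codegen";

// Scenes with an image prompt go to the image path; everything else to codegen.
function routeScene(sceneText: string): Route {
  return parseImageDirective(sceneText) === null ? "gemini_codegen" : "codex_image";
}
```

This simple pattern would break on prompts that contain `]` or a double quote, so a production parser would need proper escaping rules.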

The Codex image generator:

1. Computes a cache key from prompt + role + aspect + style + model + target dimensions.

2. Looks for a cached PNG. If found, uses it.

3. Otherwise calls `codex exec` with a sandboxed workspace and a strict prompt: write a PNG to this exact path, of these exact dimensions, on this subject, in this style.

4. Validates the output: PNG magic bytes, file size > 10 KB, normalized to target resolution, brightness gate (rejects pure-black or blown-out frames).

5. Stores the result in the cache and returns an asset record the renderer can mount.
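The validation in step 4 can be sketched as below. Assumptions are flagged in the comments: the brightness thresholds are invented, "10 KB" is read as 10 × 1024 bytes, and decoding the PNG into RGBA pixels is left to whatever image library the pipeline uses. The PNG signature bytes and the Rec. 601 luma weights are standard.

```typescript
// The fixed 8-byte signature that starts every PNG file.
const PNG_SIGNATURE = [0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a];
const MIN_FILE_BYTES = 10 * 1024; // assumption: "10 KB" means 10 * 1024 bytes
const MIN_MEAN_LUMA = 8;          // hypothetical "pure black" threshold
const MAX_MEAN_LUMA = 247;        // hypothetical "blown out" threshold

function hasPngSignature(fileBytes: Uint8Array): boolean {
  if (fileBytes.length < PNG_SIGNATURE.length) return false;
  return PNG_SIGNATURE.every((byte, index) => fileBytes[index] === byte);
}

// Mean brightness (0..255) of decoded RGBA pixels, using Rec. 601 luma weights.
function meanLuma(rgba: Uint8Array): number {
  const pixelCount = Math.floor(rgba.length / 4);
  if (pixelCount === 0) return 0;
  let total = 0;
  for (let i = 0; i < pixelCount * 4; i += 4) {
    total += 0.299 * (rgba[i] ?? 0) + 0.587 * (rgba[i + 1] ?? 0) + 0.114 * (rgba[i + 2] ?? 0);
  }
  return total / pixelCount;
}

// Return every gate the plate fails; an empty list means it is usable.
function validatePlate(fileBytes: Uint8Array, decodedRgba: Uint8Array): string[] {
  const errors: string[] = [];
  if (!hasPngSignature(fileBytes)) errors.push("File does not start with the PNG signature.");
  if (fileBytes.length <= MIN_FILE_BYTES) errors.push("File is 10 KB or smaller.");
  const luma = meanLuma(decodedRgba);
  if (luma < MIN_MEAN_LUMA || luma > MAX_MEAN_LUMA) {
    errors.push("Mean brightness is outside the accepted range.");
  }
  return errors;
}
```

The size and signature gates catch the cases where the CLI wrote an error message or an empty file to the target path; the brightness gate catches images that are technically valid but visually useless.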

The React component on the Remotion side is tiny — `AIImageScene.tsx`. It crossfades the image in over half a second, drifts a 1.0 → 1.02 scale across the scene for slow zoom, paints a top-and-bottom gradient over it so any title text stays readable, and fades out at the end. That's it. Simple and intentional.
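The motion described here reduces to two numbers per frame. The sketch below is not the real `AIImageScene.tsx`: `imagePlateStyle` is a hypothetical helper, and it assumes the fade-out also lasts half a second and that the zoom is linear, neither of which the article specifies.

```typescript
function clamp01(value: number): number {
  return Math.min(1, Math.max(0, value));
}

// Opacity and scale of the image plate at a given scene-local frame.
function imagePlateStyle(
  localFrame: number,
  durationFrames: number,
  fps: number,
): { opacity: number; scale: number } {
  const fadeFrames = Math.max(1, Math.round(0.5 * fps)); // half-second fade
  const lastFrame = Math.max(1, durationFrames - 1);
  const fadeIn = clamp01(localFrame / fadeFrames);
  const fadeOut = clamp01((lastFrame - localFrame) / fadeFrames); // assumed length
  const progress = clamp01(localFrame / lastFrame);
  return {
    opacity: Math.min(fadeIn, fadeOut),
    scale: 1 + 0.02 * progress, // slow drift from 1.0 to 1.02
  };
}
```

In a Remotion component these values would feed an inline `style` object (`opacity` and a `transform: scale(...)`) computed from `useCurrentFrame()`, so the result is identical no matter which frame is rendered first.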

The point is the **routing**: at runtime, every scene gets the right tool. Structured data, code, diagrams, and kinetic text go through the Gemini codegen path and end up as live React components. Atmosphere, photoreal context, and B-roll go through the Codex path and end up as scene-scoped image plates.

You stop arguing about "AI video as one thing." You compose two complementary AI surfaces, each playing its strongest move.

---

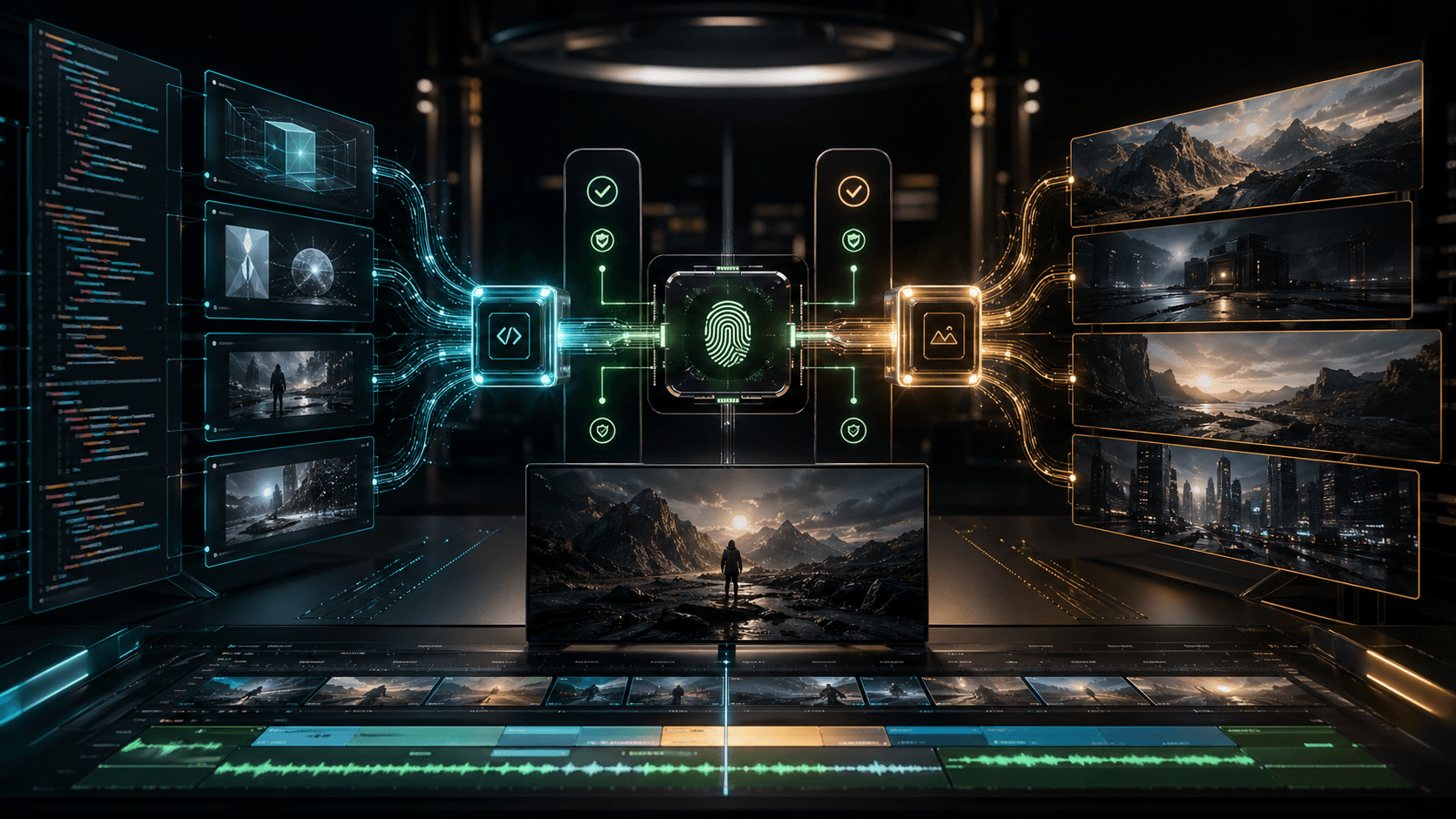

## The Marriage of the Two Surfaces

This is the part that took me a while to internalize. The first time a Codex-generated background image came up under a Gemini-generated React kinetic-text component, the result felt like a real production shot. Photoreal mood plate. Crisp interactive UI on top. Both made by AI, neither competing for the same job.

That's a combination I haven't seen anyone else put together this way. Pure AI video tools generate pixels. Pure code-driven renderers generate UI. This pipeline runs both: directed by one script, timed against the same audio timeline, and composited by the same Remotion runtime.

It's also where the pipeline finally stops looking like a hack and starts looking like a system.

---

## What the Channel Data Actually Says

I'd be lying to you if I said this iteration is already winning the algorithm. It's been live for less than a day on the latest video. Here's what the [Nano Atlas channel](https://www.youtube.com/@NanoAtlas) actually shows.

I pulled the raw analytics straight from YouTube Analytics API v2 minutes before writing this — through the same pipeline's own analytics module — for the **last seven videos**, all of which were produced by this pipeline.

**Channel snapshot (live API pull, 25 April 2026):**

- 253 subscribers, 33 total uploads, 41,009 total channel views.

- Last 7 pipeline-produced videos combined: **7,723 views, 347 watch hours, +42 net subscribers, 94 shares.**

- Average hook retention across those 7: **63.4%** (the first 10% of the video).

- Average mid-video retention: **21.9%** (the 45–55% mark).

- Average end retention: **14.5%** (the last 10%).

- Average relative retention vs comparable YouTube videos: **0.354**.

- Average view percentage: **24.9%** of the runtime.

**Top performers from the last 7:**

- "OpenAI and Anthropic Just Killed Prompt Engineering" — 2,896 views, 222 views/day, 27 net subs, 39 shares, 146 watch hours.

- "NVIDIA Just Exposed the Enterprise AI Scam" — 2,467 views, 88 views/day, 24 shares, 102 watch hours. (This was the v2 launch video.)

- "Anthropic Just Changed The Game: The True OpenClaw Killer" — 1,370 views, 80 views/day, 25 shares.

- "Google Just Changed AI Forever... (Gemma 4)" — 658 views, 61 views/day, **71% hook retention** (the strongest hook in the set).

**The new-pipeline launch:**

- Title: "OpenAI Just Killed the API (And No One's Noticed)"

- Live for ~17 hours at the time of writing.

- 28 views, 4 likes, **14.3% engagement rate** (likes ÷ views; a small denominator, but well above the 1–3% range the prior six videos sit in).

- Retention curve isn't published by YouTube yet — too fresh. I'll update.

**The honest reading:**

- Hook retention at **63%** average across the last 7 means the **scripts are doing their job**. The 3-stage editorial pipeline (Writer → Reviewer → Director) plus the 7th-grade readability cap and anti-plagiarism gates are the load-bearing wall — viewers are sticking past the first 90 seconds.

- The drop from **63% hook to 22% mid** is the real bottleneck. Mid-video is where the visuals matter most — and it's exactly where v2's twelve fixed scene types started feeling repetitive. The whole reason v3 exists is to attack that drop.

- It's too early to declare a v3 win on retention alone: the new-pipeline video has been live for less than a day, and the prior six videos are bouncing around 0.28–0.42 relative retention. I'll come back with a follow-up once there's a 14-day window.

What I won't do is pretend the numbers are bigger than they are. 253 subs is small. 7,723 views over the last seven uploads is small. The pipeline matters not because it's already winning, but because every variable that could limit it has been moved into the loop. Visuals are no longer a fixed inventory. Photoreal is no longer a separate tool. Validation is mechanical. Caching is deterministic. Iteration compounds.

---

## What Changed Under the Hood (v2 → v3)

**v2:** ~12 hand-coded React scene types. Script directives selected which type to mount. Per-scene props customized the data inside a fixed shell.

**v3:**

- The ~12 hand-coded scene types are gone from the codegen path entirely.

- **Gemini 3.1 Pro Preview** writes a fresh `Scene_<id>.tsx` for every scene that doesn't ship as a photoreal background.

- **OpenAI Codex CLI + GPT Image 2** generates per-scene PNG plates for `IMAGE_PROMPT` directives, validated by file-magic + brightness gate, mounted as `AIImageScene`.

- A 5-step validator (imports, forbidden patterns, audio frame offset, layout contract, full `tsc`) runs on every generated component. tsc errors get summarized and fed back into the next prompt.

- A SHA-1 fingerprint over the directive, schema version, reference examples, prompt template, styling helpers, and provider and model names caches results to disk. Re-renders with unchanged inputs skip the model call entirely.

- A live registry maps `scene_id → component_file` per video and is bundled into Remotion at build time.

- Hardcoded scene dispatch handles only `ai_image` and a fallback path; everything else is dynamic.

The validators are the part I'm proudest of. Models will hallucinate. Models will reach for `Math.random()`. Models will wire `getAudioPulse` to the wrong frame. The validators pin them.

---

## What's Still Missing

I'm being straight again, because that's the only useful posture.

- **More retries don't always help.** When tsc rejects a component three times, the model often digs deeper into the same wrong assumption. I'm experimenting with throwing away the conversation and re-prompting clean.

- **Prompt template is doing too much.** 30+ constraints in one block is a recipe for the model skimming. I want to split the prompt into a "must" block and a "should" block, and let the validator catch the "should" violations as soft warnings.

- **No multi-scene composition yet.** Each scene is generated in isolation. The next mile is letting the model see the immediate previous and next scene's TSX so it can choreograph a cross-scene visual cue (shared accent, continuing camera motion, complementary palette shift).

- **Codex image style consistency** across a single video isn't enforced yet — every scene is a fresh prompt. A "video-wide style header" prepended to each prompt is the next obvious move.

- **Mid-video retention.** The 22% mid number is the metric I'm watching. v3 should bend that curve. If it doesn't after a 14-day window, the assumption is wrong and I rework.

---

## What's Next

- **Visual continuity layer** — give the codegen the previous and next scene as context so transitions don't reset visually.

- **Soft-warning prompt** — split constraints into hard fails and soft warnings, retry on hard, pass on soft.

- **Codex image global style memo** — one style header propagated across every IMAGE_PROMPT in a single video for visual coherence.

- **Auto-retention follow-up** — re-pull analytics 14 days post-launch and append a "what actually happened" section to this article instead of leaving it at "early signs."

- **A direct A/B fork** — render the same script through v2 and v3, post both, and let the algorithm referee.

The goal hasn't changed. Watch a finished video and not be able to tell whether a human edited it. After v3, that goal feels less like a moonshot and more like a calibration problem.

---

## Watch It

**[OpenAI Just Killed the API (And No One's Noticed)](https://youtu.be/lOfUFrNG_W4)** — the first video from the new pipeline. Every UI scene is a React component Gemini 3.1 Pro Preview wrote. Every photoreal background is a GPT Image 2 plate. Every cut is timed to the narration's word-level timestamps.

Compare it to the [v2 launch video](https://youtu.be/ZxGGx1Yw6qU) ("NVIDIA Just Exposed the Enterprise AI Scam") and the [v1 launch video](https://rayastats.com/en/blog/i-built-a-system-that-creates-youtube-videos-from-scratch-here-s-what-happened) and the trajectory speaks for itself.

---

## Why I'm Documenting This

I'm a freelance full-stack developer. I build [Ruby on Rails](https://rayastats.com/en/services/ruby-on-rails-development) and [Next.js](https://rayastats.com/en/services/nextjs-development) products for clients. This pipeline isn't a client project — it's the place where every interesting question I have about AI agents, code generation, video, and validation gauntlets all collide. Building it in public is the cheapest way to find out which of my assumptions are wrong.

If you're curious about how I think about building things:

- [v2 — ripping out stock footage and rebuilding the visual layer in React](https://rayastats.com/en/blog/what-if-the-biggest-problem-with-ai-video-isnt-the-ai)

- [v1 — the original pipeline from scratch](https://rayastats.com/en/blog/i-built-a-system-that-creates-youtube-videos-from-scratch-here-s-what-happened)

- [What it takes to become a software developer](https://rayastats.com/en/blog/blog-post-1-what-it-takes-to-become-seftware-developer)

- [Scoring the first developer job](https://rayastats.com/en/blog/blog-post-2-scoring-the-first-developer-job)

---

## The Question I'm Sitting With

When AI writes the script, the voice, the React component for every scene, **and** the photoreal backgrounds — what's left for the human?

The answer I keep landing on: the **directive**. The contract. The list of 30 constraints that exist because of past failures. The fingerprint that says "this is the same input, give me the same output." The 5-step validator that refuses to let bad TSX near the renderer. The decision about which two AI surfaces to compose, and which one wins for which scene.

The human isn't being replaced. The human is being *moved up the stack* — out of writing the components and into writing the rules the components are written under.

That's the part I'm most excited about.

---

*[Iliya Oblakov](https://rayastats.com/en) is a freelance full-stack developer specializing in [Ruby on Rails](https://rayastats.com/en/services/ruby-on-rails-development) and [Next.js](https://rayastats.com/en/services/nextjs-development) development, based in Bulgaria. He builds web products, SaaS tools, and automation systems for clients worldwide. The YouTube channel where these iterations ship is [Nano Atlas](https://www.youtube.com/@NanoAtlas).*